Scale

Scaling is where VPUs earn their keep.

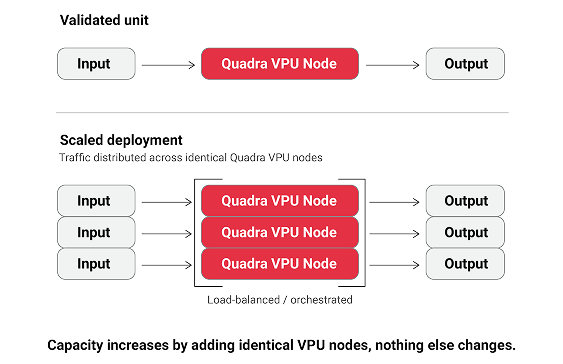

The purpose of this stage is to expand VPU deployment across workloads, regions, or environments with predictable economics and operational behavior.

Scaling is not about tuning for peak demos. It’s about repeatability under pressure.

What scaling actually means

Scaling with VPUs means:

- Adding streams without linear cost growth

- Increasing density without thermal surprises

- Expanding codecs without re-architecting

- Planning capacity without guesswork

This is where general-purpose compute fails and purpose-built silicon holds.

How to scale safely

Scaling follows the same principles as migration:

- Expand one dimension at a time: streams, codecs, regions, or duration

- Validate stability before expanding again

- Keep rollback paths intact

The goal is predictable growth, not heroic firefighting.
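The one-dimension-at-a-time rule can be checked mechanically before a rollout. The Python sketch below is a minimal illustration, not a prescribed tool. The `Deployment` fields and both function names are hypothetical and simply mirror the four dimensions listed above. The `last_step_stable` flag is assumed to come from whatever stability validation you already run.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class Deployment:
    """Hypothetical snapshot of a VPU deployment along the four scaling dimensions."""
    streams: int
    codecs: frozenset
    regions: frozenset
    max_duration_hours: int


def changed_dimensions(current: Deployment, proposed: Deployment) -> list:
    """Return the names of the dimensions that differ between two snapshots."""
    return [
        f.name
        for f in fields(current)
        if getattr(current, f.name) != getattr(proposed, f.name)
    ]


def is_safe_step(current: Deployment, proposed: Deployment, last_step_stable: bool) -> bool:
    """Allow a step only if the previous one was validated as stable
    and exactly one dimension changes."""
    return last_step_stable and len(changed_dimensions(current, proposed)) == 1
```

Because the current snapshot is never mutated, it doubles as the rollback target if the proposed step fails validation.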

Scaling triggers

Typical reasons teams scale VPUs:

- New codec rollout (AV1 at real volume)

- Traffic growth without power headroom

- Cost pressure from CPU/GPU saturation

- Geographic expansion

- Longer-duration workloads entering production

Scaling is driven by constraints, not ambition.

What “good” looks like at scale

At scale, success looks like:

- Flat or declining cost per stream as volume increases

- Stable utilization across sustained runs

- No new operational tooling required

- No special handling for peak events

If scaling increases complexity, something is wrong.
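The first criterion, flat or declining cost per stream, is easy to test against billing data. The sketch below assumes you can export `(streams, total_cost)` pairs per measurement period. The function names and the 2% tolerance are illustrative assumptions, not recommended thresholds.

```python
def cost_per_stream(total_cost: float, streams: int) -> float:
    """Unit cost for one measurement period."""
    if streams <= 0:
        raise ValueError("streams must be positive")
    return total_cost / streams


def is_flat_or_declining(samples, tolerance: float = 0.02) -> bool:
    """Check that unit cost does not rise as stream count grows.

    samples: iterable of (streams, total_cost) pairs.
    tolerance: allowed relative increase between adjacent volumes (assumed value).
    """
    ordered = sorted(samples)  # order by stream count, smallest first
    unit_costs = [cost_per_stream(cost, streams) for streams, cost in ordered]
    return all(
        later <= earlier * (1 + tolerance)
        for earlier, later in zip(unit_costs, unit_costs[1:])
    )
```

A `False` result at a particular volume points to the step where scaling stopped being predictable, which is more useful than a single average across all volumes.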

The most common failures we see at this stage:

- Scaling volume before predictability

Early wins don’t guarantee operational control.

- Assuming results generalize automatically

Scale exposes differences, not averages.

- Relying on heroics instead of systems

If scale requires exceptions, it isn’t ready.

Outputs of the Scale stage

When scaling is complete, you should have:

- VPUs deployed as a standard compute tier

- Documented capacity planning assumptions

- Predictable cost and power models

- Confidence to grow without re-evaluation
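One way to keep capacity planning assumptions documented is to encode them as data and derive the capacity and power figures from them. The sketch below is a hedged illustration: `CapacityAssumptions`, its fields, and both functions are hypothetical, and the values you supply should come from your own sustained-run measurements rather than datasheet peaks.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class CapacityAssumptions:
    """Hypothetical planning inputs, recorded explicitly so they can be reviewed."""
    streams_per_vpu: int        # measured sustained streams per VPU
    watts_per_vpu: float        # measured power draw per VPU under load
    target_utilization: float   # fraction of capacity to plan for, e.g. 0.75


def vpus_needed(peak_streams: int, a: CapacityAssumptions) -> int:
    """Number of VPUs required to serve peak_streams with planned headroom."""
    usable_streams = a.streams_per_vpu * a.target_utilization
    return math.ceil(peak_streams / usable_streams)


def power_watts(peak_streams: int, a: CapacityAssumptions) -> float:
    """Power budget implied by the VPU count above."""
    return vpus_needed(peak_streams, a) * a.watts_per_vpu
```

Keeping the assumptions in one frozen object means a change to any input is visible in review, and the cost and power models are recomputed from the same source.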

At this point, VPUs are your infrastructure.