VPU Deployment Playbook

How to deploy VPUs with confidence through real data, proven architectures and radical technical transparency.

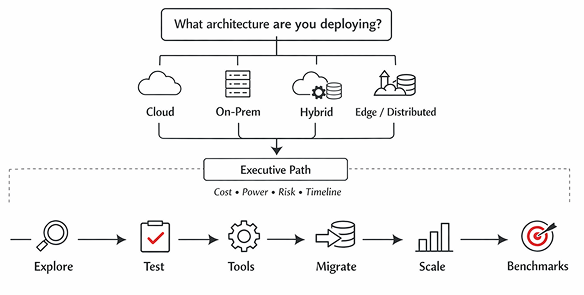

Deployment Overview

This playbook guides engineers from their current encoding architecture to a scalable VPU deployment.

1. Where does your encoding run today?

Start with your current encoding environment and the constraints shaping your infrastructure.

Cloud

Encoding runs entirely inside public cloud compute environments.

Typical Pain Point:

Runaway compute costs.

Likely Path:

Repatriate heavy encoding workloads to VPU servers to cut costs.

On-Prem

Encoding runs in infrastructure you own and operate.

Typical Pain Point:

Density and power limits.

Likely Path:

Replace inefficient CPU racks with high-density VPU servers.

Hybrid

Encoding runs across both your infrastructure and public cloud.

Typical Pain Point:

Workload orchestration complexity.

Likely Path:

Run baseline workloads on VPUs and burst to cloud only when necessary.

Edge / Distributed

Encoding runs across many regional locations close to viewers.

Typical Pain Point:

Hardware footprint limits and operational scale.

Likely Path:

Deploy compact VPU nodes at POP locations closer to users.
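The four starting points above can be read as a simple lookup from environment to pain point and likely path. The following is a minimal sketch of that mapping; the names `StartingPoint`, `STARTING_POINTS`, and `likely_path` are hypothetical and chosen only for illustration, while the text values are taken from this playbook.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StartingPoint:
    """One row of the playbook's 'where does your encoding run today' step."""
    pain_point: str
    likely_path: str


# Keys are illustrative identifiers; the strings are quoted from the playbook.
STARTING_POINTS = {
    "cloud": StartingPoint(
        "Runaway compute costs.",
        "Repatriate heavy encoding workloads to VPU servers to cut costs.",
    ),
    "on-prem": StartingPoint(
        "Density and power limits.",
        "Replace inefficient CPU racks with high-density VPU servers.",
    ),
    "hybrid": StartingPoint(
        "Workload orchestration complexity.",
        "Run baseline workloads on VPUs and burst to cloud only when necessary.",
    ),
    "edge": StartingPoint(
        "Hardware footprint limits and operational scale.",
        "Deploy compact VPU nodes at POP locations closer to users.",
    ),
}


def likely_path(environment: str) -> str:
    """Return the playbook's suggested path for a given starting environment."""
    key = environment.strip().lower()
    if key not in STARTING_POINTS:
        raise ValueError(f"unknown environment: {environment!r}")
    return STARTING_POINTS[key].likely_path


if __name__ == "__main__":
    print(likely_path("Hybrid"))
```

A lookup like this is only a starting point for discussion; real deployments often combine more than one environment.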

2. Choose your desired deployment architecture:

Determine your migration strategy and where VPU encoding should run to deliver the best performance and economics.

VPU Cloud encoding:

- Delivers cost efficiency at scale without owning hardware

- Deploy encoding inside cloud infrastructure

On-Prem VPU encoding:

- Delivers operational control and predictability through hardware ownership

- Deploy dedicated encoding racks

Hybrid encoding:

- Enables gradual migration or variable workflows

- Mix on-prem VPU capacity with overflow cloud burst

Edge encoding:

- Delivers the lowest-latency workflow with minimal backhaul

- Run encoding near users or POPs
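The hybrid option, which mixes on-prem VPU capacity with overflow cloud burst, reduces to a simple rule: fill the VPUs first and send only the excess to the cloud. The sketch below illustrates that rule under stated assumptions. The function name `split_workload` and the idea of measuring load and capacity as a count of concurrent streams are hypothetical; a real orchestrator would also weigh resolution, codec, and latency targets.

```python
from typing import Tuple


def split_workload(active_streams: int, vpu_capacity: int) -> Tuple[int, int]:
    """Split a stream count into (on_vpu, cloud_burst).

    Baseline load stays on the on-prem VPUs; only streams beyond the
    assumed VPU capacity overflow to the cloud.
    """
    if active_streams < 0 or vpu_capacity < 0:
        raise ValueError("stream counts and capacity must be non-negative")
    on_vpu = min(active_streams, vpu_capacity)
    cloud_burst = active_streams - on_vpu
    return on_vpu, cloud_burst


if __name__ == "__main__":
    # Illustrative numbers only, not measured capacities.
    print(split_workload(80, 100))   # (80, 0): everything fits on the VPUs
    print(split_workload(120, 100))  # (100, 20): 20 streams burst to cloud
```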

VPU Hardware Servers:

Quadra Video Server

Ideal for large-scale transcoding deployments requiring maximum stream density.

Quadra Mini Server

Ideal for edge deployments, labs, and smaller production spaces.

VPUs:

T1M

OEM embedded system. Ideal for small-space or edge deployments.

Form factor: M.2

Fits Quadra Mini Server

T1U

General-purpose VPU for data center encoding.

Form factor: U.2

Fits all Quadra servers

T1A

Ideal for power- or thermally constrained deployments.

Form factor: AIC

Fits 1RU Quadra Video Server

T2A

Dual-chip for 2X power. Ideal for maximum density and lowest cost-per-stream.

Form factor: AIC

Fits 1RU Quadra Video Server
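When shortlisting hardware, the VPU list above can be treated as a small catalog keyed by model. The following sketch records each model's form factor and server fit exactly as stated in this playbook, and filters by form factor. The names `Vpu`, `VPUS`, and `vpus_by_form_factor` are hypothetical and exist only for this illustration.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Vpu:
    """Form factor and server fit for one VPU model, as listed in the playbook."""
    form_factor: str
    fits: str


# Values are copied from the playbook's VPU list; nothing here is measured data.
VPUS = {
    "T1M": Vpu("M.2", "Quadra Mini Server"),
    "T1U": Vpu("U.2", "all Quadra servers"),
    "T1A": Vpu("AIC", "1RU Quadra Video Server"),
    "T2A": Vpu("AIC", "1RU Quadra Video Server"),
}


def vpus_by_form_factor(form_factor: str) -> List[str]:
    """Return the model names that use the given form factor."""
    wanted = form_factor.strip().upper()
    return [name for name, vpu in VPUS.items() if vpu.form_factor.upper() == wanted]


if __name__ == "__main__":
    print(vpus_by_form_factor("AIC"))  # ['T1A', 'T2A']
    print(VPUS["T1U"].fits)            # all Quadra servers
```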

3. Design & Validate the Workflow

Confirm that your encoding pipeline, software stack, and Ecosystem partners work together seamlessly.

VPU Ecosystem Directory

Leverage our Ecosystem partners

- Find where each partner operates in the VPU video pipeline and leverage the tools they offer for deployment.

4. Deploy, Operate & Scale

Launch production VPU infrastructure with the tools to monitor, automate, and grow.

This includes:

- provisioning servers

- monitoring streams (Bitstreams)

- automating workflows (Bitstreams)

- scaling capacity

- maintaining reliability

| Area | Tools |
| --- | --- |
| Workflow automation | Bitstreams |
| Performance monitoring | Bitstreams dashboard |
| Hardware health | VPU telemetry |
| Stream management | Orchestration software |

Outcome: The architecture becomes a production system that scales.